Should Merchants Build a Dedicated Website for AI Agents?

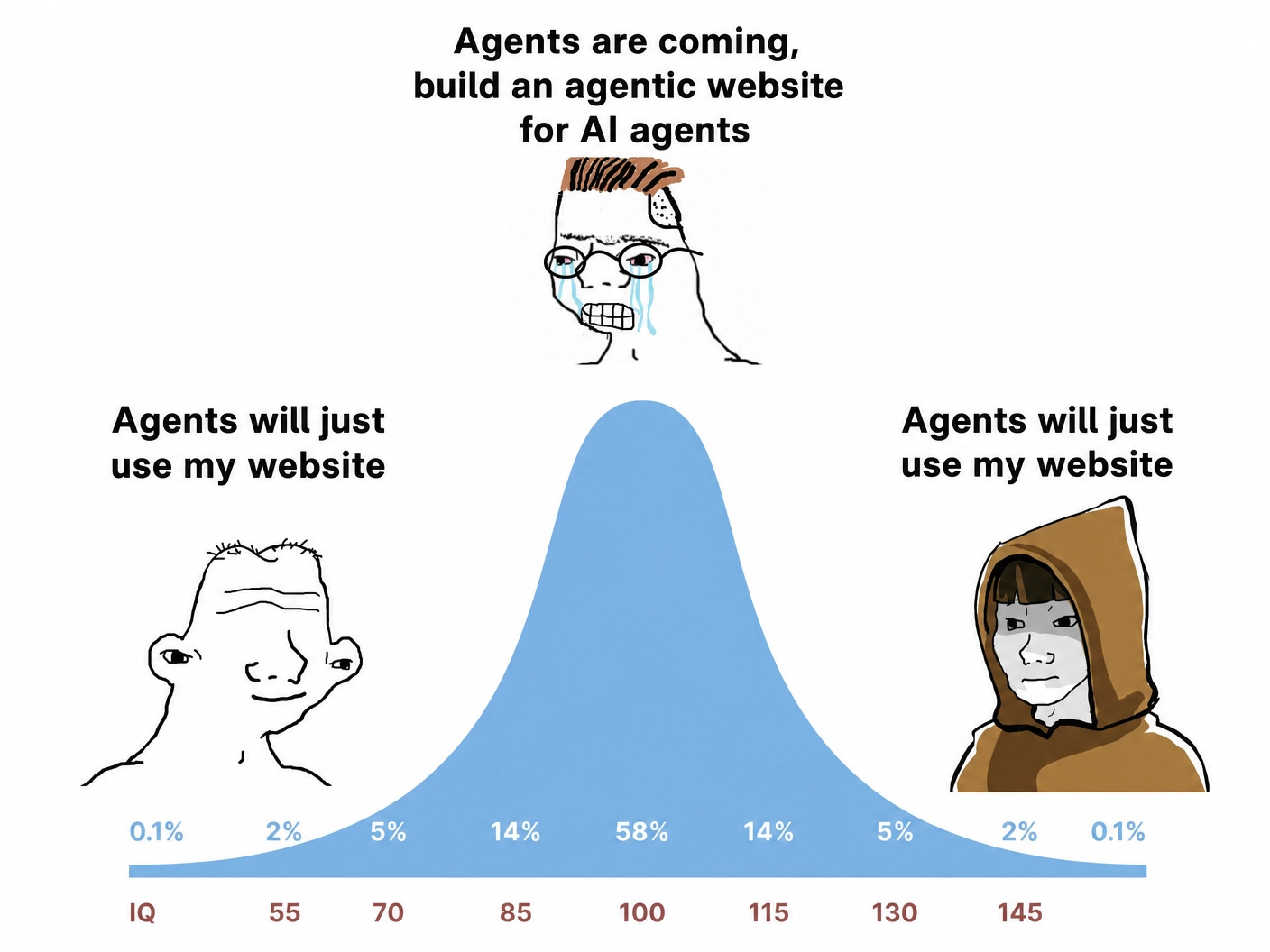

We’ve been getting this question a lot lately, so we sat down with the actual evidence. Honest answer: we wouldn’t build it today.

TL;DR

Merchants keep asking whether they should build a separate AI-agent version of their site or subdomain (agents.brand.com, Markdown-for-Agents, etc.). Our answer today: probably not.

There’s no public evidence that Google, OpenAI, Anthropic, or Perplexity reward agent-optimized sites with better rankings, citations, or AI visibility. Existing bot-management tooling already provides most of the agent observability merchants actually need.

Cleaner sites do help browser agents operate faster, but the gains are incremental on top of a fundamentally slow and unreliable interaction model, with no published evidence of merchant-side business uplift. The more likely long-term path is agents calling structured tools directly via WebMCP/API-style interfaces rather than navigating websites like humans.

There are also modest downsides: analytics fragmentation, citation leakage, and surface drift.

This could change quickly if major AI platforms begin explicitly rewarding agent-efficient sites in discovery or rankings. Today, they do not.

We’ve been writing a lot lately about how AI is showing up on merchant sites — Gemini in Chrome, Skills and AI Mode browsing, WebMCP, Dark On-Site AI Activity. One question keeps showing up in our inbox: should we build a separate version of our site for AI agents?

Quick — who are we even talking about?

Three categories of AI traffic typically show up on a commerce site, each doing very different work:

Indexers: Persistent crawlers that build the catalogs so your store appears in answers. Examples: Googlebot, Bingbot, OAI-SearchBot, PerplexityBot, Claude-SearchBot.

Training crawlers: Bulk crawlers gathering content for model training. Separate conversation, separate policy decision. Examples: GPTBot, ClaudeBot, CCBot.

User-triggered agents: The new category. They fetch a page because a real user, in real time, asked an AI to do something on your site. Examples: ChatGPT-User, Perplexity-User, Claude-User, Google-Agent.

The agent-website pitch is only about category #3.
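To make the three categories concrete, here is a minimal Python sketch that sorts a User-Agent string into one of them. The bot names come from the list above; the function name, the category labels, and the substring-matching approach are our own illustrative assumptions, not anything a vendor documents.

```python
# Tokens are the bot names listed in the three categories above.
# Substring matching on the User-Agent header is an illustrative assumption.
AGENT_CATEGORIES = {
    "indexer": ("Googlebot", "Bingbot", "OAI-SearchBot", "PerplexityBot", "Claude-SearchBot"),
    "training": ("GPTBot", "ClaudeBot", "CCBot"),
    "user_triggered": ("ChatGPT-User", "Perplexity-User", "Claude-User", "Google-Agent"),
}


def classify_user_agent(user_agent: str) -> str:
    """Return the traffic category for a User-Agent string, or "other"."""
    ua = user_agent.lower()
    for category, tokens in AGENT_CATEGORIES.items():
        # Case-insensitive substring check against each known bot token.
        if any(token.lower() in ua for token in tokens):
            return category
    return "other"
```

A User-Agent header can be spoofed, so treat this as a first-pass label for log analysis rather than proof of who is actually calling.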

Two claims behind the pitch

When you press past the marketing, the case for an agent website rests on two specific claims:

You’ll get prioritized in AI discovery, citations, and answers.

Agents will execute tasks more reliably and faster on an agent-optimized surface.

Claim #1: “You’ll get prioritized in AI discovery and citations”

The pitch goes like this: AI shopping engines will prefer your products. Perplexity and ChatGPT will cite you more often. You’ll rank higher in AI Overviews. You’ll be more discoverable to user-triggered agents. You’ll be ahead of the curve for the AI commerce wave.

There is no public evidence for any of it. Lighthouse’s new Agentic Browsing category is the first concrete signal from a major platform, but it is a diagnostic, not a ranking lever. Even there, the audit runs against your primary site, not a parallel agent surface. (The closest thing to an “agent surface lite” in the audit is llms.txt, and even that is treated as optional.)

Google, OpenAI, Perplexity, and Anthropic have published a lot: bot identities, indexing rules, commerce protocols, partnership programs, ranking signals, and AI feature documentation. None of them has published anything saying they prioritize content served from an agent-optimized subdomain in any of their AI surfaces. Not search results. Not AI Overviews. Not AI shopping experiences. Not user-triggered agent retrieval.

If a vendor is telling you an agent subdomain will increase your AI visibility or your citation rate, ask for the source. The claim is structurally an inference — “agents prefer cleaner content, so cleaner content must rank higher” — that nobody on the AI provider side has confirmed.

A smaller, ancillary claim often comes alongside this one: “you’ll also get better visibility into what agents are doing on your site.” This is real but not material. Cloudflare bot management already classifies and logs ChatGPT-User, Perplexity-User, Claude-User, Google-Agent, and the rest. DataDome, HUMAN, and Akamai’s bot products do the same. Most merchants asking this question are already paying for one of these and just haven’t looked at the relevant dashboard. What an agent subdomain adds is cleaner separation: agent traffic isolated on its own host, not mixed into your main analytics. Real, but trivial. You’re paying engineering cost to save a filter on a dashboard you already have.

So the central commercial claim has no evidence. The ancillary visibility claim is real but doesn’t move the needle.

Claim #2: “Agents will execute tasks faster on an agent-optimized surface”

This one is more interesting because it’s partially true. We should engage with it honestly before making the bigger argument.

Modern websites are genuinely hostile to agents. Popups, cookie banners, autoplay videos, floating chat widgets, ads, modals that appear two seconds after page load, infinite scroll, content that renders only after JS executes, lazy-loaded images and elements that aren’t in the initial DOM. All of it is incidental for a human (you scroll past, dismiss, ignore) and costly for an agent (it’s another DOM element to parse, another modal to handle, another visual region to recognize). Cleaning up the interaction surface does measurably help.

A recent benchmark from TollBit and KERNEL, across five commerce sites, showed browser-based agents complete tasks like add-to-cart 24-35% faster on agent-optimized surfaces, with measurably higher reliability.

But it’s nowhere near consumer expectations. This is the structural problem.

On the optimized surface, median time-to-completion for an add-to-cart task still runs 70 to 150 seconds. The fastest individual runs hit 30-40 seconds. No one would sit through a session of an agent fumbling through the UI.

After three decades of building commerce on the internet, responsiveness is now measured in tens of milliseconds, not tens of seconds. In e-commerce, SaaS, and travel, a 100-millisecond delay at checkout doesn’t cost basis points; it costs conversions. Deloitte and Google found that a 0.1-second improvement in load time lifted conversion rates by 8.4% in retail and 10.1% in travel.

The structural answer for agent execution at user-acceptable speeds is WebMCP: agents calling structured tool endpoints (search, addToCart) directly, in milliseconds, instead of automating a browser. We covered the architecture in depth in an earlier post. Different architecture, different ceiling.

The Lighthouse Agentic Browsing category points the same way. Three of its five audit groups check WebMCP (registered tools, declarative coverage on forms, schema validity), and a fourth measures Cumulative Layout Shift specifically because elements moving between identification and interaction is what breaks browser agents. Google’s own auditing tool is treating tool-calling as the primary path and DOM-scraping as the fragile one.
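To illustrate what "calling structured tools directly" means in practice, here is a minimal Python sketch of a tool registry with declared parameter schemas. It is not the WebMCP API. Only the tool names search and addToCart come from the text; the catalog, the cart, the registry shape, and the call_tool helper are all hypothetical.

```python
from typing import Any, Callable

# Hypothetical in-memory catalog and cart, standing in for real store services.
CATALOG = {
    "sku-1": {"title": "Trail Shoe", "price": 89.0},
    "sku-2": {"title": "Road Shoe", "price": 99.0},
}
CART: dict[str, int] = {}


def search(query: str) -> list[str]:
    """Return SKUs whose title contains the query (case-insensitive)."""
    q = query.lower()
    return [sku for sku, item in CATALOG.items() if q in item["title"].lower()]


def add_to_cart(sku: str, quantity: int) -> dict[str, int]:
    """Add a quantity of a known SKU to the cart and return a copy of the cart."""
    if sku not in CATALOG:
        raise KeyError(f"unknown sku: {sku}")
    CART[sku] = CART.get(sku, 0) + quantity
    return dict(CART)


# Each tool declares its parameter names and types so a caller can be validated
# before the handler runs. The registry layout is an assumption for illustration.
TOOLS: dict[str, tuple[Callable[..., Any], dict[str, type]]] = {
    "search": (search, {"query": str}),
    "addToCart": (add_to_cart, {"sku": str, "quantity": int}),
}


def call_tool(name: str, args: dict[str, Any]) -> Any:
    """Validate args against the tool's declared schema, then invoke it."""
    handler, schema = TOOLS[name]
    if set(args) != set(schema):
        raise ValueError(f"{name} expects parameters {sorted(schema)}")
    for key, expected in schema.items():
        if not isinstance(args[key], expected):
            raise TypeError(f"{key} must be {expected.__name__}")
    return handler(**args)
```

An agent using this kind of interface would issue something like call_tool("addToCart", {"sku": "sku-1", "quantity": 1}) as a single validated call, with no DOM to parse and no modal to dismiss.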

Small risks worth noting

None of these are major problems on their own, but with no clear upside today, they still matter:

AI systems may start relying on the agent surface as a public source of truth, even when it differs from the main site. And if search/index crawlers accidentally discover or index that surface, it can start becoming part of your public web footprint.

Two public versions of the same content can drift over time — prices, policies, inventory, or product details diverging between the main site and the agent version.

“For AI agents” is not a privacy boundary. Anything exposed on a public agent surface can still be fetched, cached, screenshotted, quoted, or scraped.

It adds another public surface that has to stay accurate, monitored, and operationally in sync with the main site.
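The drift risk above is the easiest one to check mechanically. Here is a minimal Python sketch that compares product records from the main site and the agent surface and reports every mismatch. The function name, the record shape, and the field names (price, inventory) are hypothetical; a real check would pull both sides from whatever feeds or APIs the merchant already has.

```python
from typing import Any

# Marker used in the "field" slot when a whole record is absent on one side.
MISSING_RECORD = "<record>"


def find_drift(
    main: dict[str, dict[str, Any]],
    agent: dict[str, dict[str, Any]],
    fields: tuple[str, ...] = ("price", "inventory"),
) -> list[tuple[str, str, Any, Any]]:
    """Return (sku, field, main_value, agent_value) for every mismatch."""
    drift: list[tuple[str, str, Any, Any]] = []
    for sku in sorted(set(main) | set(agent)):
        main_rec, agent_rec = main.get(sku), agent.get(sku)
        if main_rec is None or agent_rec is None:
            # The SKU exists on only one surface; report the whole record.
            drift.append((sku, MISSING_RECORD, main_rec, agent_rec))
            continue
        for field in fields:
            if main_rec.get(field) != agent_rec.get(field):
                drift.append((sku, field, main_rec.get(field), agent_rec.get(field)))
    return drift
```

Running a check like this on a schedule is exactly the kind of ongoing operational cost a second public surface adds.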

Our verdict

If a merchant asks today: should we build an agent website? Our honest answer is no, not yet.

The central commercial claim — better discovery, more citations, prioritization in AI surfaces — has no public evidence behind it. The ancillary visibility claim doesn’t add anything beyond your existing bot management. The execution-speed claim is partially true but capped: even on the optimized surface, performance doesn’t reach consumer or prosumer levels, and there’s no published business uplift for merchants. With nothing material on the upside, even small risks don’t get offset. If your motivation is “I want agents to use my site at speeds users will tolerate,” focus on WebMCP.

Caveat: this analysis is for commerce sites. Content publishers face different tradeoffs.

What would change our mind

This is a “today” answer, not a “forever” answer.

There are real, documented efficiencies for agents reading and acting on cleaner surfaces. They just don’t accrue to merchants today. If at some point Google, OpenAI, or Anthropic publicly say “we will prioritize agent-efficient websites in our search results, AI shopping experiences, or user-triggered actions” — the math changes overnight. The work merchants put into an agent surface would suddenly have direct distribution payoff, the way structured product data already pays off in Google Shopping today. Lighthouse Agentic Browsing is the early form of that measurement infrastructure. The next question is whether Google ties the score to surfacing in AI Mode, AI Overviews, Gemini in Chrome, or Shopping — the way Core Web Vitals eventually fed Search ranking. If they do, the conclusion still won’t be “build a second site.” The reward, by Google’s own design, accrues to your primary domain.

When facts change, we change our take.


Strong piece. "No public evidence" is the line that should land hardest.

Building for agents has merit, but it isn't a subdomain problem or a content problem. It's a contract problem. agents.brand.com isn't the answer, and shipping a fresh llms.txt every release isn't either. That's the old SEO instinct (publish more, let crawlers sort it out) with an agentic coat of paint. Agents don't want more content to read. They want a contract they can call.

That's why UCP actually looks legit. The point of publishing at /.well-known/ucp isn't handing an agent another doc to parse. It's exposing your capabilities, schemas, and signed actions in one place that every UCP-aware agent already knows to check.

The real work isn't parallel storefronts. It's fixing the PDPs you already have so the data shows up cleanly through that contract. Clean product schema. Live inventory and price. Capability declarations that match what the agent is trying to do. That's an agentic storefront that does something. llms.txt is a sticky note.

I don't think there is one "system" in place, but is there an Agent Score? Something where, if you built an agent and ran it across a sample of sites, it would give companies insight into where they are falling short: discovery, conversion, checkout, etc.